Managing big data has become a challenge for many companies. Whether your company is small or big, you need to use the best big data tools to manage your data.

As a result, companies are looking for employees who are conversant with big data analysis tools and can demonstrate skill in managing big data.

There are many tools for handling big data, but most of them require a technical person to operate.

To become one of those competent employees, you need to learn these tools. In this article, I will show you some of the best data tools you need.

I will also point out the features you need to know to become a skilled data scientist. Mastering these tools will improve your chances of securing a good job at most big companies.

Here are 21 of the best big data tools:

1. MongoDB

MongoDB is one of the best big data tools. Its managed cloud service, MongoDB Atlas, is fully automated and elastic. With this tool, your team can get any resource they need, whenever they need it, because it automates infrastructure provisioning, setup, and deployment.

Additionally, you can modify your cluster in just a few clicks, with no downtime window required. Its best uses include storing data from mobile apps, product catalogs, and content management systems.

Features

- It supports IP whitelisting.

- It encrypts data both at rest and in transit.

- It takes continuous backups of your data.

- It supports point-in-time recovery.

2. Apache Drill

This tool allows experts to run interactive analyses of large-scale datasets. Apache designed it to scale to 10,000 or more servers. In addition, it is capable of processing petabytes of data and millions of records in seconds. Drill also supports data locality, so it is a good idea to co-locate the datastore and Drill on the same nodes.

Features

- It supports NoSQL databases.

- It can join data from multiple data stores with a single query.

- It supports data locality.

- It supports HBase, MongoDB, and Google Cloud Storage.

- It is designed around a JSON data model.
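
To make the "single query across multiple data stores" idea concrete, here is a minimal sketch in plain Python. It does not use Drill or its API. The two JSON strings are hypothetical stand-ins for records coming from two different stores, and the helper names `join_stores` and `total_per_customer` are made up for illustration.

```python
import json
from collections import defaultdict

# Hypothetical records standing in for two separate data stores,
# for example a document database and a JSON file.
CUSTOMERS_JSON = '[{"id": 1, "name": "Ada"}, {"id": 2, "name": "Lin"}]'
ORDERS_JSON = (
    '[{"customer_id": 1, "total": 30.0},'
    ' {"customer_id": 1, "total": 12.5},'
    ' {"customer_id": 2, "total": 8.0}]'
)


def join_stores(customers, orders):
    """Hash join: index one side by key, then probe it with the other side."""
    by_id = {customer["id"]: customer for customer in customers}
    joined = []
    for order in orders:
        customer = by_id.get(order["customer_id"])
        if customer is not None:
            joined.append({"name": customer["name"], "total": order["total"]})
    return joined


def total_per_customer(rows):
    """Aggregate the joined rows, like a GROUP BY over the join result."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["name"]] += row["total"]
    return dict(totals)


customers = json.loads(CUSTOMERS_JSON)
orders = json.loads(ORDERS_JSON)
result = total_per_customer(join_stores(customers, orders))
print(result)
```

A query engine does the same kind of work at much larger scale, and lets you express it as one SQL statement instead of hand-written loops.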

3. Apache Lucene

This tool is a Java library that provides powerful indexing and search features. Apache Lucene is distributed under the commercially friendly Apache Software License. Its goal is to provide world-class search capabilities.

Features

- It is capable of advanced text analysis.

- It has tokenization capabilities.

- It has spellchecking capabilities.

- It has configurable storage engines.

- It has powerful, accurate, and efficient search algorithms.

- It provides Python bindings.
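
Tokenization and indexing are the core of any search library. The sketch below is plain Python, not Lucene code, and only illustrates the general idea of an inverted index under simplifying assumptions (lowercase alphanumeric tokens, AND semantics for multi-word queries). The function names and sample documents are hypothetical.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Split text into lowercase alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs):
    """Map each token to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for token in tokenize(text):
            index[token].add(doc_id)
    return index


def search(index, query):
    """Return ids of documents containing every token in the query."""
    terms = tokenize(query)
    if not terms:
        return set()
    matches = set(index.get(terms[0], set()))
    for term in terms[1:]:
        matches &= index.get(term, set())
    return matches


# Hypothetical sample documents.
docs = {
    1: "Big data tools",
    2: "Search big indexes fast",
    3: "Data search",
}
index = build_index(docs)
print(search(index, "big data"))
```

A real search library adds ranking, stemming, and on-disk index formats on top of this basic structure.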

4. Apache Solr

Solr is distributed under the Apache Software License. This tool is highly reliable, scalable, and fault-tolerant, and it has a centralized configuration. It drives the navigation and search features of many large internet sites. Solr publishes many well-defined extension points that make it easy to plug in both index-time and query-time plugins. Lastly, Solr supports both schemaless and schema modes, depending on your goal.

Features

- It is optimized for high-volume traffic.

- It is highly fault-tolerant and scalable.

- It is flexible and adaptable, with easy configuration.

- It has near real-time indexing.

- It has powerful extensions and ships with plugins for indexing rich content such as PDF and Word documents.

5. Improvado

This tool was built for marketers who want all their data in one place. You can view your data on the Improvado dashboard, or pipe it into a visualization tool such as Excel or Looker. This saves time: all your marketing data can be connected within minutes to Google Sheets or Tableau.

Features

- It has an automated marketing dashboard.

- It is customizable.

- It has a customer journey mapping system.

- It has an automated marketing data pipeline.

- It calculates performance metrics automatically.

6. Storm

Storm makes it easy to reliably process unbounded streams of data, doing for real-time processing what Hadoop did for batch processing. This tool is easy to set up and use. Furthermore, it guarantees that your data will be processed, and it integrates with the database technologies you already use.

Features

- It is fast.

- It is fault-tolerant.

- It is easy to operate.

- It is programming language agnostic.

7. Octoparse

This tool can be used to extract data from many websites without coding. It meets the needs of business owners, first-time users, and experienced users alike. Octoparse has task templates that make things easier for first-timers, and it also lets users capture data without configuring a task. Moreover, extraction can be scheduled to run in the cloud at any time and at any frequency.

Features

- It has automatic IP rotation.

- It offers 6-20x faster extraction.

- You can scrape and obtain data on the Octoparse cloud platform 24/7.

- It is easy to use.

- It deals with all kinds of websites.

- You can download scraped data as CSV or Excel files, or access it through an API.

8. RapidMiner

RapidMiner has a strong track record of helping organizations cut costs, drive revenue, and avoid risk. It is used in various industries, including healthcare, financial services, eCommerce, and manufacturing, where it draws on the large amounts of information an industry generates to optimize decision making. RapidMiner is a fully transparent, end-to-end data science platform. Furthermore, it uses machine learning and model deployment to make data work more productive.

Features

- It is designed with a graphical user interface.

- It has multiple data management methods.

- It has application templates.

- It is designed with in-memory, in-database, and in-Hadoop analytics.

- It has a powerful visual programming environment.

- It helps you build and deliver better models faster.

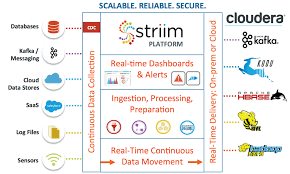

9. Cloudera Big Data Analysis tool

Cloudera was founded in 2008 by some of the brightest minds from Silicon Valley's leading companies, including Google, Yahoo, and Facebook. This tool is used in many leading industries to grow businesses, improve lives, and advance human achievement. The top industries include financial services, healthcare, technology, and manufacturing. Moreover, Cloudera provides an enterprise data cloud for any data, anywhere, from the Edge to AI.

Features

- It has automated deployment and readiness checks.

- It has service and configuration management.

- It has client configuration management.

- It includes Cloudera Navigator for data management.

- It supports CDH 4 and 5.

10. Tableau Public

This is an interactive data visualization tool. It is distinguished by its drag-and-drop features, which make data analysis easy. Interestingly, this tool does not require coding. You can easily create stunning interactive visualizations on its free platform. Additionally, Tableau Public can connect to Microsoft Excel and Microsoft Access. However, this tool is limited to 10 gigabytes of data storage.

Features

- You can customize visualizations from your browser.

- You can explore topics with hashtags.

- You can find inspiration from your activity feed.

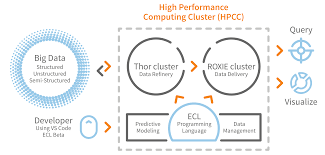

11. HPCC Systems big data tool

HPCC Systems' big data tool combines different data easily and fast. This system allows you to acquire, amend, and deliver information faster, which saves you time and money. Additionally, with this tool you will spend less time connecting systems and more time developing features.

Features

- HPCC Systems has built-in data enhancement and machine learning APIs.

- It increases responsiveness to customers and stakeholders.

- It runs on commodity hardware and in the cloud.

- It is scalable to many petabytes of data.

- It is an open-source data lake platform.

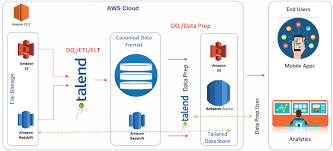

12. Talend

Talend Open Studio is an open-source data integration project based on Eclipse RCP. This tool is used to integrate operational systems for business intelligence and data warehousing, and for data migration. Furthermore, it is billed as one of the most open, innovative, and powerful data integration solutions on the market. Lastly, this tool can be used to integrate data in both large and small organizations.

Features

- It has metadata-driven design and execution.

- It has real-time debugging.

- It has robust execution.

- It has graphical development.

13. Karmasphere

Karmasphere Studio and Karmasphere Analyst are products of Karmasphere, a big data intelligence software company based in Menlo Park, California. This tool is for big data professionals, developers, and technical and business analysts who work with the rapidly growing class of extremely large data sets. It also provides comprehensive solutions that let large organizations quickly and easily build and deploy applications designed to get the most out of their data.

Features

- It lets you create new SQL queries or build on queries from other team members.

- It allows you to save and share query histories.

- It lets you filter and sort query results using flexible criteria, generating reusable SQL "behind the scenes".

- It lets you format and visualize results as pie, line, bar, scatter, and other chart types for additional analysis.

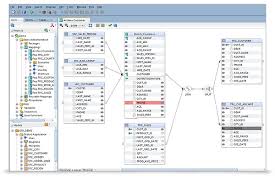

14. Oracle

Oracle's data tools support the integration of different platforms. Oracle is a strong option for a relational database, as it is easy to set up. It also stands out for its high security, which makes it suitable for handling private information such as credit card details.

Features

- It includes Oracle Loader for Hadoop.

- It has Oracle XQuery for Hadoop.

- It is built with Oracle R Advanced Analytics for Hadoop.

- It has Oracle DataSource for Apache Hadoop.

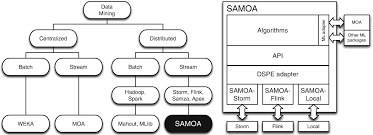

15. Apache SAMOA

Apache SAMOA (Scalable Advanced Massive Online Analysis) is a big data tool for mining big data streams. It provides programming abstractions for developing new algorithms, and it can be used to carry out classification, regression, and clustering. Furthermore, with this tool you develop a distributed streaming ML algorithm only once, and can then execute it on several different distributed stream processing engines.

Features

- It has no system downtime.

- Once written, a program can run everywhere.

- It does not require a complex update process.
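
A streaming ML algorithm differs from a batch one in that it updates its model one example at a time, without storing the stream. The sketch below shows that idea with a tiny nearest-centroid classifier in plain Python. It is not SAMOA code and not one of its algorithms. The class name, method names, and sample data are hypothetical.

```python
class StreamingCentroidClassifier:
    """Keeps one running mean (centroid) per label, updated per example."""

    def __init__(self):
        self.counts = {}     # label -> number of examples seen
        self.centroids = {}  # label -> running mean of the features

    def learn_one(self, features, label):
        """Update the centroid for `label` with a single example."""
        count = self.counts.get(label, 0) + 1
        self.counts[label] = count
        mean = self.centroids.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            # Incremental mean: no past examples need to be stored.
            mean[i] += (value - mean[i]) / count

    def predict_one(self, features):
        """Return the label whose centroid is closest to `features`."""
        def squared_distance(label):
            centroid = self.centroids[label]
            return sum((a - b) ** 2 for a, b in zip(features, centroid))

        return min(self.centroids, key=squared_distance)


# Hypothetical stream of (features, label) pairs.
stream = [
    ((0.0, 0.0), "a"),
    ((10.0, 10.0), "b"),
    ((1.0, 0.0), "a"),
    ((9.0, 10.0), "b"),
]
model = StreamingCentroidClassifier()
for features, label in stream:
    model.learn_one(features, label)

print(model.predict_one((1.0, 1.0)))
```

Because each update touches only a count and a mean, the memory used stays constant no matter how long the stream runs.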

16. Apache Spark

This is a fast, general-purpose cluster computing system used for large-scale data processing. It lets you write applications quickly in Java, Scala, Python, and SQL. Interestingly, it performs well on both batch and real-time data. Additionally, this tool can run anywhere, including on Hadoop, on Apache Mesos, stand-alone, or in the cloud.

Features

- It can perform tasks up to 100x faster than Hadoop MapReduce for in-memory workloads.

- It facilitates scheduling.

- It supports multiple languages.

- It has advanced analytics.

- It supports streaming data.

- It supports machine learning (ML).

17. Apache Hadoop

Apache Hadoop is one of the most widely used data tools in the big data industry. It allows for the distributed processing of large data sets across multiple computers using simple programming models. This tool is designed to detect and handle failures at the application layer. It consists of four parts: the Hadoop Distributed File System (HDFS), MapReduce, YARN, and Hadoop Common (the shared libraries).

Features

- It brings flexibility to data processing.

- It is fault-tolerant.

- It has high-speed data processing.

- It can store and process any amount of data quickly.

- It is easily scalable.
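
The "simple programming model" behind MapReduce can be sketched in a few lines of plain Python. This is a single-process illustration of the map, shuffle, and reduce steps using the classic word count example. It does not use Hadoop, and the function names are chosen for illustration only.

```python
from collections import defaultdict


def map_phase(line):
    """Map: emit a (word, 1) pair for every word in one input line."""
    return [(word, 1) for word in line.lower().split()]


def shuffle(pairs):
    """Shuffle: group all emitted values by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine the values for one key into a single result."""
    return key, sum(values)


def word_count(lines):
    """Run the three phases in sequence on a list of input lines."""
    pairs = [pair for line in lines for pair in map_phase(line)]
    groups = shuffle(pairs)
    return dict(reduce_phase(key, values) for key, values in groups.items())


print(word_count(["big data", "big tools"]))
```

On a real cluster, the map and reduce functions run in parallel on many machines and the framework handles the shuffle, which is why the programmer only needs to write the two small functions.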

18. Neo4j

Neo4j is a graph database used by data analysts and data scientists. It stores data and relationships natively together, so performance holds up as complexity and scale grow. This leads to server consolidation and incredibly efficient use of hardware. This tool plays a key role in data storage.

Features

- It is scalable and reliable.

- It is flexible, as it does not need a schema or data type to store data.

- It supports ACID transactions.

- It can integrate with other databases.

- It is highly available.
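
Storing relationships next to the data they connect is what makes graph traversals cheap. The sketch below models a tiny graph in plain Python and walks it breadth-first. It is not Neo4j code and does not use its query language. The node names, the `KNOWS` relationship type, and the function name are hypothetical.

```python
from collections import deque

# Hypothetical graph: nodes carry properties, and each relationship
# is stored alongside the node it starts from.
nodes = {
    "ann": {"name": "Ann"},
    "bob": {"name": "Bob"},
    "cy": {"name": "Cy"},
    "dee": {"name": "Dee"},
}
relationships = {
    "ann": [("KNOWS", "bob")],
    "bob": [("KNOWS", "cy")],
    "cy": [("KNOWS", "dee")],
    "dee": [],
}


def reachable_within(start, rel_type, max_hops):
    """Breadth-first traversal along one relationship type, up to max_hops."""
    seen = {start}
    queue = deque([(start, 0)])
    found = []
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand past the hop limit
        for rtype, target in relationships.get(node, []):
            if rtype == rel_type and target not in seen:
                seen.add(target)
                found.append(nodes[target]["name"])
                queue.append((target, hops + 1))
    return found


print(reachable_within("ann", "KNOWS", 2))
```

Each hop only looks at the relationships of the current node, so the cost depends on the part of the graph you visit rather than on the total size of the data.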

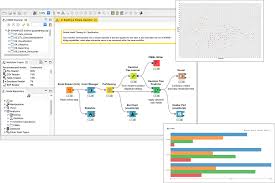

19. KNIME

KNIME stands for Konstanz Information Miner. This tool is easy to use and well suited to big data problems. It can be used for analytics, reporting, and integration. KNIME can also be combined with Hadoop for even better results. With this blend, you get sophisticated data mining, advanced data analytics, and SQL-style big data querying. This tool supports Linux, OS X, and Windows operating systems.

Features

- It can connect to popular Hadoop distributions.

- It has data blending capabilities.

- It provides big data extensions.

- It can integrate with multiple tools, such as legacy scripting.

- It has a rich algorithm set.

20. Qubole

This platform provides services that reduce the time needed to run data pipelines, streaming analytics, and machine learning workloads on any cloud. Qubole says you can lower your cloud data lake cost by 50% using this tool. The platform is largely self-managing, as it learns, manages, and optimizes big data on its own. Its well-known users include Warner Music Group, Adobe, and Gannett. However, this tool is paid and subscription-based. It is also best suited to large organizations with multiple users.

Features

- It has real-time analytics.

- It has a drag-and-drop interface.

- It has customizable reporting.

- It has predictive analytics.

- It has ad hoc reporting.

21. R

R is used by many scientists and business leaders to make powerful business decisions. The R framework uses the DeployR server, DeployR repository, and DeployR APIs to upload data, and you can upload files in different formats. Additionally, R can be linked to multiple languages, including Java, C, and Fortran. However, R has drawbacks in speed, memory management, and security.

Features

- It has strong graphical capabilities.

- It can perform complex statistical calculations.

- It can be used for machine learning.

- It can handle a variety of structured and unstructured data.

- It supports data handling operations.

- It is compatible with other data processing technologies.

Conclusion

This article has covered data tools that help you make powerful decisions for your business. Analyzing big data can be time-consuming, costly, and tiresome if done manually, and a good data tool will make your work easier.

I believe that I have listed most of the top big data tools, each with features that can improve your company's decision making.

These tools have different features, and they suit organizations of different sizes. Looking through those features will help you find the best tool for your use.